Formal verification going mainstream for SoC block verification

February 17, 2016

The use of formal verification technology as a mainstream technique for system-on-chip (SoC) designs is, at last, becoming a recognized approach to co...

The use of formal verification technology as a mainstream technique for system-on-chip (SoC) designs is, at last, becoming a recognized approach to combat the verification gap. A recent survey suggests that formal assertion-based verification (ABV) is now used on 26 percent of chip design projects. However, the promise of this alternative approach to classic simulation has taken many years to bear fruit, and still only advanced verification environments incorporate it. Why is this and what can we learn from its use so far to make it available to the SoC engineering community at large?

SoC block verification hitting a wall

Since their inception, SoC devices have presented a verification nightmare to development teams. While the verification of a complete SoC is now a task best left to emulation and rapid prototyping systems, even the larger blocks on these devices have outgrown simulation-only environments.

The advent of emulation, ever-faster simulators, verification intellectual property (VIP) for key tests, and the Universal Verification Methodology (UVM) have all helped to mitigate this. Still, the verification requirement exceeds available processing time in simulation-based environments.

Formal verification has helped to improve block verification through the use of automated “apps” that target specific needs, which otherwise require significant simulation effort. Checking the correct operation of standard communication protocols, ensuring key connections and register operation, analyzing correct startup sequences on domain resets, and many other tasks are now handled by these solutions.

However, we have just begun to tap into the true power of formal verification. Many of its usage issues have been eliminated leaving us at the forefront of what may be an entirely new era in verification, as this technology is deployed for hard-core verification.

Formal verification: If it’s so good, where is it today?

First, a quick review of formal verification technology, why it has the potential to create this fundamental shift, and what is stopping it today.

Hardware simulation works by cycling a block of hardware description language (HDL) code through a serial sequence of meaningful states to demonstrate its operation. This state sequence is driven by input stimulus (an HDL description of a set of events on the input of the device) designed to explore the right states to identify operational issues.

This approach begs the question: If we know all the states a code block can get into and the inter-state transitions, then can’t we simply ask questions about the code operation to ensure it is correct? This would avoid having to write many lines of stimulus to try and get the code block into the correct, information-bearing states. This is the approach used by formal verification tools.

This basic approach can be morphed into a number of useful applications. For example, if the questions to be asked can be created automatically based on an aspect of the design code and a verification scenario to be checked, then an automated app for a verification purpose can be created. This would not require the user to write the question. If the formal tool can demonstrate specific sequences of states (a state machine operation, for instance) with minimal input, then a design engineer can understand how his or her code might execute, revealing possible mistakes.

The real power of formal verification is exercised when engineers ask the questions themselves. This requires the questions, or properties, to be written using assertions and applied to the design in a process known as assertion-based verification orABV.

Of course, this high-level description masks the issues with ABV, including the capacity and performance requirements of a tool that stores this much information are already being solved with the latest technologies.

Two issues remain as barriers for the widespread use ofABV:

- The authoring of assertions, often using the SystemVerilog standard syntax, can be complex and difficult to visualize

- The understanding of verification progress, or coverage, is difficult to comprehend and collate with that of other verification methods

Although improvements have been made in both of these areas, more is required to lower the learning curve, allowing the general proliferation ofABV.

ABV application

There are two common methods that ABV is applied during the verification process. The first is to check for specific, corner case style issues, usually of a nature that would otherwise require a significant effort to build a simulation test bench for the problem to be analyzed. The second is the more general checking of a block, either in conjunction with simulation or standalone.

The first use model for formal verification is valuable and can shave a reasonable percentage off the verification schedule. The second model has the potential to alter the characteristic verification process, saving significant time and resource expenditure, while increasing the overall potential to discover every bug in the design. Already some industry segments are using ABV in this second mode extensively. These include automotive and aeronautical electronics where high quality and reliability is a factor.

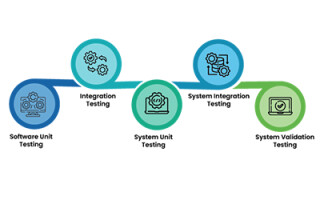

In a combined simulation-formal verification flow, as shown in Figure 1, it is common to use simulation for general operational analysis and to “get a feel” for the design’s behavior and performance. In addition, there are some functions where simulation is more appropriate, such as mathematical data processing or signal processing. However, formal verification is well suited to functions of a control or data transport variety like finite state machines, data communication, and protocol checks. In addition, ensuring certain kinds of verification scenarios, such as Safety Checks (e.g. can a certain activity ever happen), is also a sweet spot for this technology. These code and scenario examples commonly require a high proportion of the verification resource.

Assertion authoring improvements

In the same way that UVM drove a layered approach to simulation test bench creation, new techniques are emerging that introduce abstraction into assertion authoring. These abstractions reduce the complexity by masking assertion detail, while allowing the engineers to think about the verification task rather than individual characteristics of the assertions.

For example, OneSpin Solution’s Operational Assertions is a SystemVerilog library that allows formal tests to be represented in a transactional timing diagram-like manner, not unlike high-level UVM sequences, widely recognized by verification engineers. Breker Verification Systems’ graph-based test sequences, now under consideration by the Accellera Portable Stimulus standards committee, is another abstraction form that could also be applied to assertion authoring.

These techniques, while easing the application of formal tests, have the advantage of providing a recognizable and more natural input scheme, allowing engineers to relate to the verification process underway by eliminating some of the formal verification mystery.

Common coverage models

Simplifying assertions is just one part of the puzzle. At the other end of the process is the collation of coverage information from various sources to understand overall verification progress regardless of the tools used. Simulation progress still is primarily focused on code coverage of one sort or another, with some functional coverage thrown in. Formal verification coverage focuses on assertions (so called “Assertion Coverage”), whether they were executed, did they pass or fail, or did they pass with a caveat (e.g., a bounded proof such as “the code passes within a set number of clock cycles”). This information may be fed back to a verification planning system to provide some useful data.

However, measuring formal coverage, where the actual code tested by specific assertions is identified, is an area of interest to leading formal verification vendors. Schemes have been proposed that vary both in terms of precision and execution resources required. The key is the ability to compare these formal models with that of simulation to provide a combined, meaningful coverage assessment. The Accellera Unified Coverage Interoperability Standard (UCIS) committee is focused on this goal and has proposed methods by which the two may be compared. More work is required in this area, but it is clear that several of the formal verification vendors have solutions that allow a reasonable metric of progress to bec alculated.

Simulation style debug

The debug of formal verification code in a manner that is meaningful to a simulation-centric engineer has, to a large extent, been solved by many of he formal verification vendors. Most tools can output a “witness” in the event of an assertion failure. That is, a sequence of events in the form of a simulation waveform that led to the assertion failure. Indeed, some of the vendors, including OneSpin, can output simulation tests that allow the failure to be reproduced in the simulator for further study.

Cracking the code of mainstream ABV

It is clear that the use of ABV is starting to become mainstream. In recent papers, both ARM and Oracle declared the full power of ABV in their environments and noted that it was now being used at a significant level on their projects.

Solving the three legged stool of Assertion Authoring, Collated Coverage and Simulation-centric Debug, and combining it with a clear understanding of the design areas and scenarios where formal verification excels will propel this approach into the mainstream of SoC verification. Once this happens, it will have a dramatic impact on future design quality and development schedules.

OneSpin Solutions

www.onespin.com

@OneSpinSolution

www.linkedin.com/company/onespin-solutions

![[Figure 1 | A combined simulation and formal verification flow would include simulation for analysis and an impression of how the design will behave and perform.]](https://data.embeddedcomputing.com/uploads/articles/wp/1976/56bd0d637f463-Fig1.jpg)