Bulls, bears, and bunnies: The 6th RISC-V Workshop in Shanghai

May 19, 2017

Last week, I attended the 6th RISC-V Workshop, held in Shanghai. RISC-V is, of course, the open-source processor architecture invented and introduced by the University of California, Berkeley in 2014. The previous workshop, held last November in Silicon Valley, attracted around 350 participants; this workshop drew about the same number. The majority of attendees were from Asia, especially China, which is not surprising given the Shanghai location.

You’ll understand shortly what’s meant by the terms bulls, bears, and bunnies.

The Bulls

Certainly, the Berkeley crew, most of whom founded SiFive and are working to commercialize RISC-V, are unapologetically enthusiastic about RISC-V. Also enthusiastic was Andes Technology, which has to date developed and sold its proprietary 32-bit processor IP, but announced at the conference that its new 64-bit architecture would be based on RISC-V. Other startups and small companies are also bullish on RISC-V. But they have to be, because at this point in their lifecycles, they’re close to betting their companies on the success of RISC-V.

What are they betting on? Mostly on IoT and “minion” applications, a term coined by the lowRISC team to describe individual processors on SoCs doing infrastructure tasks such as communications management, power management, and security. Companies such as Microsemi, Nvidia, and Samsung are either providing chips with RISC-V capabilities (Microsemi FPGAs) or working on SoCs: RISC-V-based microcontrollers for the IoT market, or larger SoCs with RISC-V minion cores.

The Bears

There weren’t too many bears in attendance, but they’re focused on one area: high-end application processors (real bears wouldn’t even come to this workshop, so calling these people bears is a relative term). If one of the RISC-V goals is to provide royalty-free processor IP, then the high end, with the largest royalty streams, is the primary beneficiary. However, the high-end processors that they want to replace, namely ARM Cortex-A devices, have lots of features that still aren’t implemented for RISC-V, such as vector math, hardware virtualization extensions, multi-cluster coherence, and much more. The prognosis among these people is that RISC-V for the high end is still at least 12 months from even semi-serious consideration in SoCs.

The Bunnies

I’m not sure if there’s a term for the cautiously optimistic but still skeptical group, but I’ll call them bunnies here, based on one of the workshop’s keynote speakers, Bunnie Huang (look him up, and you’ll be amused and impressed by the source of his name). Bunnie is well known in the hacker and open-hardware communities, and is cautiously optimistic about the prospect of “open silicon” based on RISC-V. However, open silicon carries a lot of requirements from his community, and he’s not sure this will meet all those requirements. It’s not clear that any technology/flow/ecosystem could meet those requirements, mainly because of the financial and technical difficulties and commitments needed to fab silicon. However, his keynote was good in pointing out a potential evolutionary path in that direction.

Like Bunnie, many in attendance are both cautiously optimistic and skeptical: cautiously optimistic about the technology, skeptical that an ecosystem can achieve critical mass quickly enough to achieve significant market share in the embedded world. These bunnies, though, are at least making some initial investments in the RISC-V ecosystem. My company, Imperas, for example, is developing Open Virtual Platforms (OVP) processor models of RISC-V cores.

The opening statement of the Imperas presentation at the workshop was “The size of the RISC-V market share will depend more on the software ecosystem than on specifics of RISC-V implementations.” While most of the talks at the workshop focused on RISC-V hardware, deservedly so given the stage of development of RISC-V silicon, Imperas focused on the longer term. ARM’s continuing success is due more to its software ecosystem than to the quality of the ARM-processor IP. The RISC-V community is going to have to make it easy for software engineers to use RISC-V processors to gain significant market share.

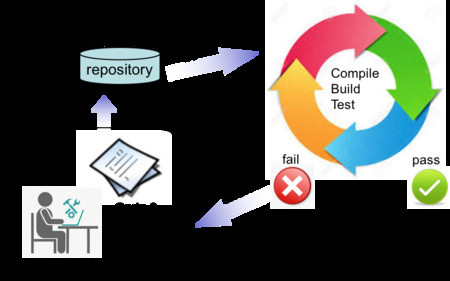

The meat of the Imperas presentation focused on modern embedded software development methodology, specifically on the Continuous Integration/Continuous Test (CICT) subset of the Agile methodology. CICT essentially mandates that when an engineer checks in code, an automated compile/build/test process is kicked off. This has numerous benefits: it forces engineers to write more modular, less complex code; provides more immediate feedback, enabling developers to focus on high-quality code; reduces major integration bugs; and ensures that a current build is always available for test, demo, or release purposes. Various CICT management tools are available, both open source (such as Jenkins) and commercial.

Setting up a CICT flow requires complete automation and meaningful metrics, such as code coverage, code complexity, and feature completeness. Software simulation (virtual platforms) is particularly well suited to such a flow, in part because its tools are non-intrusive, requiring no instrumentation or modification of source code.
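To make the check-in-triggered flow a bit more concrete, here is a minimal Python sketch of the idea. It is not taken from the Imperas presentation and does not use the API of Jenkins or any other CICT tool. The names `run_pipeline`, `coverage_gate`, and `on_check_in`, the 80% coverage threshold, and the stubbed stage results are all assumptions made up for illustration; a real flow would invoke the actual compiler, build system, and simulator at each stage.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# A stage is any callable returning (passed, detail). Hypothetical convention.
Stage = Callable[[], Tuple[bool, str]]


@dataclass
class StageResult:
    name: str
    passed: bool
    detail: str = ""


def run_pipeline(stages: List[Tuple[str, Stage]]) -> List[StageResult]:
    """Run stages in order and stop at the first failure."""
    results = []
    for name, stage in stages:
        passed, detail = stage()
        results.append(StageResult(name, passed, detail))
        if not passed:
            break  # later stages are skipped once one fails
    return results


def coverage_gate(covered_lines: int, total_lines: int,
                  threshold: float = 0.8) -> Tuple[bool, str]:
    """Turn a coverage metric into a pass/fail gate (threshold is assumed)."""
    ratio = covered_lines / total_lines if total_lines else 0.0
    detail = f"coverage {ratio:.0%} (threshold {threshold:.0%})"
    return ratio >= threshold, detail


def on_check_in() -> List[StageResult]:
    """Hypothetical hook that would be called on every check-in.

    The stage bodies are stubs with made-up results.
    """
    stages = [
        ("compile", lambda: (True, "ok")),
        ("build", lambda: (True, "ok")),
        ("test", lambda: (True, "12 passed")),
        ("coverage", lambda: coverage_gate(850, 1000)),
    ]
    return run_pipeline(stages)


if __name__ == "__main__":
    for result in on_check_in():
        status = "PASS" if result.passed else "FAIL"
        print(f"{status} {result.name}: {result.detail}")
```

The point of the sketch is that every step is automated and every metric becomes a pass/fail decision, so nobody has to inspect results by hand before a build is declared good.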

We’re not quite sure where RISC-V is heading, but it’s an interesting ride at this point. We’ll see what progress has been made when we get to the 7th RISC-V Workshop, to be held November 28-30 in Silicon Valley.

Larry Lapides is a Vice President at Imperas Software. He holds an MBA from Clark University, an MS in Applied & Engineering Physics from Cornell University, and a BA in Physics from the University of California, Berkeley.